General Hardware and Software

Fueled by curiosity and a desire to learn, I have worked on countless small hardware and software projects, ranging from one-hour hacks to projects I worked on almost daily for weeks. Here are a few that are worth mentioning.

piProx

piProx grew out of my curiosity about the internals of both RFID and the Linux kernel. It is a Linux kernel-mode driver that reads signals from Wiegand RFID readers through GPIO pins, such as those found on the ubiquitous Raspberry Pi or BeagleBone Black. I was already quite familiar with low-level userland programming, and I had long wanted to explore Linux at a lower level, specifically how the kernel and its device drivers interface with hardware. I had also become interested in RFID and its uses, and I realized that writing a kernel driver for RFID devices was an excellent way to explore both areas while creating something genuinely useful. When the driver is loaded, it requests two GPIO pins from the GPIO subsystem, sets their direction to input, requests an interrupt on each, and registers interrupt handlers for the 1-bit line and the 0-bit line. It then creates a character device named /dev/prox and registers it with udev to provide an interface between the driver and userland. The return code of every step is checked, and if any step fails during initialization, the driver cleans up and unloads itself. The character device provides an easy-to-use interface: in nonblocking mode, a read returns the last scanned card, while in blocking mode it waits until a card is scanned. In either case, the full binary data read from the card, including all parity bits, is returned to the reader. Additionally, in cooperation with a very good friend of mine, Peter Chinetti, we developed a series of userland tools that interpret the HID Corporate 1000 RFID card format and report the card number, facility code, and result of the parity (error detection) check.
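
The Corporate 1000 layout handled by those userland tools is not spelled out here, so the sketch below instead decodes the simpler, widely used 26-bit H10301 Wiegand format to illustrate the same idea: slice the raw bit string into facility code and card number fields, then verify the leading even-parity and trailing odd-parity bits. It assumes the frame is available as a string of '0'/'1' characters, MSB first; that representation and the helper names are hypothetical and not necessarily what /dev/prox or the original tools use.

```python
from dataclasses import dataclass


@dataclass
class WiegandCard:
    facility_code: int
    card_number: int
    parity_ok: bool


def _ones(bits: str) -> int:
    """Count the '1' characters in a bit string."""
    return bits.count("1")


def encode_h10301(facility: int, card: int) -> str:
    """Build a 26-bit H10301 frame (MSB first) with correct parity bits."""
    if not (0 <= facility <= 0xFF and 0 <= card <= 0xFFFF):
        raise ValueError("facility must fit in 8 bits and card in 16 bits")
    body = f"{facility:08b}{card:016b}"  # frame bits 1-24
    # Leading bit makes bits 0-12 contain an even number of 1s.
    even = "1" if _ones(body[:12]) % 2 else "0"
    # Trailing bit makes bits 13-25 contain an odd number of 1s.
    odd = "0" if _ones(body[12:]) % 2 else "1"
    return even + body + odd


def decode_h10301(bits: str) -> WiegandCard:
    """Decode a 26-bit H10301 frame given as a '0'/'1' string, MSB first.

    Layout: bit 0 = even parity over bits 1-12, bits 1-8 = facility code,
    bits 9-24 = card number, bit 25 = odd parity over bits 13-24.
    """
    if len(bits) != 26 or set(bits) - {"0", "1"}:
        raise ValueError("expected 26 characters of '0'/'1'")
    facility = int(bits[1:9], 2)
    card = int(bits[9:25], 2)
    even_ok = _ones(bits[0:13]) % 2 == 0
    odd_ok = _ones(bits[13:26]) % 2 == 1
    return WiegandCard(facility, card, even_ok and odd_ok)


if __name__ == "__main__":
    frame = encode_h10301(18, 12345)
    print(frame, decode_h10301(frame))
```

Corporate 1000 follows the same slice-and-check pattern, but with different field widths and a different parity scheme, so the offsets and parity masks would change.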

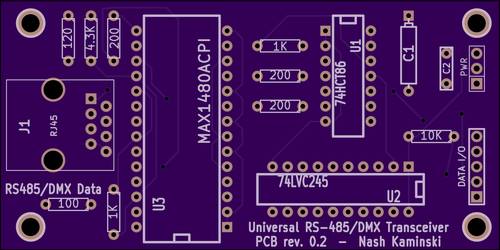

Universal TTL serial -> RS485/DMX interface

The initial inspiration for this project came from my prior experience with theatrical sound and lighting systems, as well as my ambition to have the best and most impressive holiday lighting display in the neighborhood. However, DMX lighting equipment, particularly the nicer control boards, easily cost thousands of dollars, far beyond my budget of approximately $200 per year. Knowing that DMX uses RS-485 serial communication at the physical layer and that its data link layer protocol is an open and therefore well-documented standard, I decided to build an interface that would allow the TTL serial output of a low-cost single-board computer or microcontroller, such as an Arduino, Raspberry Pi, or BeagleBone Black, to drive an RS-485 link. The DMX data link layer protocol is also fairly easy to implement in software, and several implementations already exist, including the UART native DMX plugin in the Open Lighting Architecture for Linux and the DMXSerial library for Arduino and other Atmel ATmega-based microcontrollers. While there are a few preexisting open-source designs for serial-to-RS-485 bridges, they often fall short in areas I consider very important, such as voltage isolation, standards compliance, and cost. I therefore designed my own TTL serial to RS-485 interface around the Maxim MAX1480ACPI fully isolated RS-485 line driver IC, along with several 74-series CMOS logic ICs for signal conversion. The interface accepts either a 3.3V or 5V input signal and can be configured to output either a 5V or 3.3V signal. The 3.3V output is designed as a tri-state output to ensure compatibility with TI AM335x SoCs, such as those found on the BeagleBone and BeagleBone Black.
The interface also provides hardware support for bidirectional communication, and its output is strong enough to drive a link at up to 2 Mbit/s, or up to 1000 ft of twisted-pair cable at lower data rates. Like nearly all of my other projects, this design is released as open-source hardware and is available on my GitHub page.
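
To make the software side concrete, here is a minimal Python sketch of what a DMX sender pushes through such an interface each frame, assuming standard DMX512 framing: 250 kbit/s, 8N2 (11 bits per slot), and a null start code of 0x00 followed by up to 512 channel levels. The break and mark-after-break (MAB) are produced by holding the line rather than by sending bytes, so they appear here only as timing parameters; the 92 µs and 12 µs defaults are the commonly cited DMX512-A transmit minimums. The function names are illustrative.

```python
from typing import Sequence

BAUD_RATE = 250_000      # DMX512 line rate, bits per second
BITS_PER_SLOT = 11       # 1 start bit + 8 data bits + 2 stop bits (8N2)
MAX_CHANNELS = 512
NULL_START_CODE = 0x00   # start code for ordinary dimmer-level data


def build_dmx_packet(levels: Sequence[int], start_code: int = NULL_START_CODE) -> bytes:
    """Return the bytes sent after break/MAB: the start code, then channel levels."""
    if not 1 <= len(levels) <= MAX_CHANNELS:
        raise ValueError("DMX512 carries 1 to 512 channels")
    if not all(0 <= v <= 255 for v in (start_code, *levels)):
        raise ValueError("start code and levels must be 0-255")
    return bytes([start_code, *levels])


def frame_time_us(n_slots: int, break_us: float = 92.0, mab_us: float = 12.0) -> float:
    """Time for one frame in microseconds; n_slots includes the start code."""
    slot_us = BITS_PER_SLOT * 1_000_000 / BAUD_RATE  # 44 us per slot
    return break_us + mab_us + n_slots * slot_us


if __name__ == "__main__":
    packet = build_dmx_packet([255] * MAX_CHANNELS)
    t = frame_time_us(len(packet))
    print(f"{len(packet)} slots, {t:.0f} us per frame, {1e6 / t:.1f} Hz max refresh")
```

A full 512-channel frame takes 92 + 12 + 513 × 44 = 22,676 µs, which caps the refresh rate at about 44 frames per second regardless of how fast the host computer is.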

Reverse Engineering

In general, I am a huge proponent of open-source software and of open, well-documented standards. However, sometimes I want to accomplish something that involves a proprietary or otherwise undocumented interface. In other cases, I am simply curious about how a piece of hardware or software works internally. In either case, although it is often a formidable engineering and problem-solving task, it is frequently possible to gain significant insight into the inner workings of a system through a variety of techniques, some of them rather complex.

CSRMesh BTLE Protocol + Feit HomeBrite smart bulbs

This endeavor began when I wanted to integrate my home lighting with some of the other connected/IoT devices around my house. I thought it would be both cool and genuinely useful if, for example, I could turn on lights automatically when motion is detected outdoors or when the garage door is opened. I also liked the idea of controlling brightness and color temperature based on the time of day. While I was aware of solutions such as the LIFX series of WiFi smart bulbs that allow this, the cost of such systems was prohibitive. I also knew that, at the time, WiFi chipsets were significantly more expensive than Bluetooth, Zigbee, or Z-Wave chipsets. Since I had a Raspberry Pi 3 with Bluetooth 4.1/LE support available, and after a little research into the cost of Zigbee and Z-Wave USB dongles, it was evident that Bluetooth LE offered the best cost-to-performance ratio of the competing protocols in my situation. At the time, I also assumed that since Bluetooth LE is an open standard, reverse engineering the protocol used by a simple Bluetooth LE device would merely be a matter of deducing the format of a data packet containing at most a few bytes of data. I therefore kept an eye out for low-cost BTLE smart bulbs and eventually came across Feit HomeBrite BTLE smart bulbs for $10 at my local hardware store. The bulbs were controlled over BTLE by a simple-looking but rather buggy and unstable Android or iOS app. I bought one and set it up using my Nexus 6 smartphone. Once it was set up, I enabled Android's built-in Bluetooth HCI snoop log (packet capture) functionality and performed a series of operations, such as dimming to each available brightness level and powering the bulb on and off several times, so that the packet format would ideally be easy to deduce.

Upon opening the log in Wireshark, I saw that the data was being sent over Bluetooth LE using GATT (the Generic Attribute Profile) to two handles, 0x0011 and 0x0014. However, instead of being able to easily identify the format of the data, I noticed that the packets were almost completely different from one another, even when they carried exactly the same command. There was no clear pattern in the data at all. On further examination, the only regularities I found were a 2-byte little-endian value at the start of each packet, which began at a random value and incremented by one with each packet, and one byte in the packet that never changed. I then decided to Google the UUID of the BTLE characteristic to see whether I could gain any insight into how the protocol operated. I got a few hits on Qualcomm/CSR documentation indicating that the protocol in question was Qualcomm/CSR's CSRMesh. CSRMesh was touted as a layer on top of BTLE that provides mesh networking for BTLE devices, as well as strong authentication and encryption of all data exchanged between them. It was designed for all types of IoT devices, including lighting, HVAC, access control, and home security. However, there had been few efforts to capture and analyze CSRMesh traffic, and none of them had succeeded in reverse engineering any aspect of the protocol. Since I had some free time and was excited by the challenge of being the first to reverse engineer what appeared to be a rather complex cryptographic protocol, I decided to spend half a day or so seeing whether I could make any progress.
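
The two regularities noted above can be checked mechanically once the GATT write values have been exported from the capture. The sketch below assumes each write value is available as raw bytes and that the 16-bit counter wraps around modulo 2^16; the function names and the example bytes are made up for illustration.

```python
import struct
from typing import List, Sequence


def sequence_number(payload: bytes) -> int:
    """Read the 16-bit little-endian counter at the start of a write value."""
    if len(payload) < 2:
        raise ValueError("payload shorter than 2 bytes")
    (seq,) = struct.unpack_from("<H", payload, 0)
    return seq


def counters_increment(payloads: Sequence[bytes]) -> bool:
    """True if each counter is exactly one more than the previous (assumes mod 2**16 wrap)."""
    seqs = [sequence_number(p) for p in payloads]
    return all(((b - a) & 0xFFFF) == 1 for a, b in zip(seqs, seqs[1:]))


def constant_offsets(payloads: Sequence[bytes]) -> List[int]:
    """Byte offsets whose value is identical in every captured payload."""
    length = min(len(p) for p in payloads)
    return [i for i in range(length) if len({p[i] for p in payloads}) == 1]


if __name__ == "__main__":
    # Made-up example write values, not real CSRMesh traffic.
    captures = [bytes.fromhex(h) for h in ("feff01aa", "ffff02aa", "000003aa")]
    print(counters_increment(captures), constant_offsets(captures))
```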

My first step was to document what I could already determine about the protocol. Public documentation indicated that the encryption algorithm was AES-128 and that the hash and HMAC algorithms were based on SHA-256. The initial key exchange was also apparently protected by an asymmetric elliptic curve algorithm. All of these algorithms are considered very secure, so I knew that attacking them directly was not feasible. Instead, I focused on the app, aiming to discover how the AES key was derived and how packets were formed and digitally signed. I also planned to look for flaws in the implementation of the protocol that weakened the cryptographic functions. I used Apktool to disassemble the Android application into smali, a human-readable, assembly-like representation of Dalvik bytecode, and then set to work looking for routines that used cryptographic functions, since these were likely candidates for deriving the AES key from the provided PIN and for encrypting and signing packets. While finding these functions was quite easy, actually reverse engineering their logic proved very difficult. The entire csr Java package was heavily obfuscated: all function and class names had been replaced with single letters, and the functionality was fragmented across many static methods in many small subclasses, apparently to make reverse engineering as difficult as possible. However, after about an hour of analysis, I had my first major breakthrough: I discovered that the network key is formed by concatenating the ASCII representation of the PIN with a constant, hashing the result, and passing the hash through a reversing and truncation function.
This discovery also revealed a major vulnerability in the protocol: since the key depends only on the PIN, there are only 10^4 possible keys. All of them can be generated by running the key derivation function on every PIN from 0000 to 9999, and the constant bytes in the packet can be used to determine whether a packet has been decrypted correctly. Owing to the difficulty of reversing the obfuscated code by hand, I did a little more research on smali debugging and analysis and discovered that there are open-source utility classes designed to be called by adding one or two lines of smali code to a target; they convert variables of various types to strings and write them to the Android system log. I chose the open-source utility class IGLogger to assist with analyzing the much more complex packet forming and packet signing functions. After a few more hours of logging the arguments of crypto functions, tracing through the obfuscated smali code, and a fair bit of reasoning to correlate the inputs of crypto functions with the logic derived from directly reversing the smali code, I completed what was my most challenging hardware/software hacking endeavor to date: I reverse engineered the packet forming and packet signing functions, which allowed me to control CSRMesh devices using my own implementation of the protocol. This made my newly written Python code the first, and currently the only, open-source reverse engineered implementation of the CSRMesh bridge protocol. The Python module I developed, along with more extensive protocol documentation, is available on GitHub, and the module is also listed in the Python Package Index.
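
A minimal sketch of the key derivation and the resulting PIN search described above. The real constant appended to the PIN is not reproduced here, so `KEY_SUFFIX` is a hypothetical placeholder, and the order of the reversing and truncation steps (reverse the SHA-256 digest, then keep 16 bytes for AES-128) is an assumption. The `looks_decrypted` callback stands in for the actual check, which would decrypt a captured packet with the candidate key and test the known constant bytes; that decryption step is not shown.

```python
import hashlib
from typing import Callable, Optional

# HYPOTHETICAL placeholder: the real constant appended to the PIN is not shown here.
KEY_SUFFIX = b"<constant>"


def derive_network_key(pin: str, suffix: bytes = KEY_SUFFIX) -> bytes:
    """PIN -> 128-bit key: SHA-256(ASCII PIN + constant), reversed, truncated.

    Reversing before truncating to 16 bytes is an assumption of this sketch.
    """
    digest = hashlib.sha256(pin.encode("ascii") + suffix).digest()
    return digest[::-1][:16]


def brute_force_pin(
    looks_decrypted: Callable[[bytes], bool], suffix: bytes = KEY_SUFFIX
) -> Optional[str]:
    """Try all 10**4 four-digit PINs; return the first whose key passes the check."""
    for n in range(10_000):
        pin = f"{n:04d}"
        if looks_decrypted(derive_network_key(pin, suffix)):
            return pin
    return None
```

Because the search space is only 10,000 PINs, the loop finishes in a fraction of a second even in pure Python, which is what makes the small key space a practical weakness rather than a theoretical one.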

Stratasys 3D Printer Network Protocol

During my time as a network engineer at the IIT Idea Shop, I noticed that 3D printing on the Stratasys uPrint was an incredibly popular means of fabrication. Many complex jobs would take several hours to complete, and there was a constant stream of inquiries about the completion status of a given part or where a given job was in the machine's queue. The only way to interface with the machine was a provided Windows application, which allowed users to upload jobs and view the status of the machine and job queue. If this status information were easily available to users, for example via a web page, they could look it up themselves. However, the network protocol used between the uPrint and its control program was proprietary and completely undocumented, so it would be necessary to reverse engineer it in order to extract the desired data. I began by using Wireshark to capture all network packets sent to and from the machine while the control software was running. This produced a large amount of data, most of which was sent to and from port 53742 on the machine. When interpreted as ASCII text, parts of the data were readable, while other parts contained seemingly random bytes, and how the protocol worked overall was still unclear. Further analysis showed that the protocol used a request/response scheme in which all commands and responses were null-terminated C strings padded to 64 bytes. A command was sent first, followed by each of its arguments in order as a separate 64-byte message, and the argument list was terminated by sending the negative acknowledgement, NA.
Once the full command and its arguments had been sent, the machine sent the command and arguments back to the sender, and each had to be acknowledged individually with an acknowledgement, 'OK'. When a command returns more than 64 bytes, the machine responds with a packet containing a string consisting only of digits, whose numeric value is the size N of the data payload to be returned. Once this is acknowledged with 'OK', the next N bytes are the data returned by the command. Putting this all together, sending the 'GetFile' command followed by the argument 'status.sts' and 'NA', replying 'OK' to all responses, and finally reading N bytes from the socket results in a large blob of ASCII text, formatted in a way not terribly different from JSON but too heavily mangled to fix using string operations. To solve this, a custom parser was developed in Python to parse the received data into a Python dict. Finally, the dict was re-serialized into proper JSON, and the data was presented both on a web page and in raw form via a RESTful service, giving all users of the prototyping lab instant access to this data feed without any manual intervention. I have also made the code available on GitHub.
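
A minimal Python sketch of the exchange described above, assuming that every message, including each 'OK', is a NUL-padded 64-byte string and that the connection is plain TCP to port 53742. The function names are hypothetical. The loop simply acknowledges every echoed message until a digits-only size message arrives, so it would misbehave if an echoed argument were itself all digits, and it does not cover commands whose responses fit within 64 bytes.

```python
import socket

PORT = 53742   # port observed in the Wireshark capture
MSG_LEN = 64   # every protocol message is padded to 64 bytes


def pack_message(text: str) -> bytes:
    """Encode text as a NUL-terminated C string padded with NULs to 64 bytes."""
    raw = text.encode("ascii") + b"\x00"
    if len(raw) > MSG_LEN:
        raise ValueError("message longer than 63 characters")
    return raw.ljust(MSG_LEN, b"\x00")


def unpack_message(raw: bytes) -> str:
    """Decode a padded message up to its first NUL byte."""
    return raw.split(b"\x00", 1)[0].decode("ascii", "replace")


def recv_exact(sock: socket.socket, n: int) -> bytes:
    """Read exactly n bytes, since TCP may deliver data in smaller chunks."""
    chunks = []
    while n > 0:
        chunk = sock.recv(n)
        if not chunk:
            raise ConnectionError("connection closed mid-message")
        chunks.append(chunk)
        n -= len(chunk)
    return b"".join(chunks)


def run_command(sock: socket.socket, command: str, *args: str) -> bytes:
    """Send a command whose reply is larger than 64 bytes and return the payload."""
    # Command first, then each argument, then NA to terminate the argument list.
    for text in (command, *args, "NA"):
        sock.sendall(pack_message(text))
    # Acknowledge each echoed message with OK until a digits-only size arrives.
    while True:
        reply = unpack_message(recv_exact(sock, MSG_LEN))
        sock.sendall(pack_message("OK"))
        if reply.isdigit():
            return recv_exact(sock, int(reply))


def get_status(host: str, timeout: float = 10.0) -> str:
    """Fetch the raw status.sts text from the printer at host."""
    with socket.create_connection((host, PORT), timeout=timeout) as sock:
        return run_command(sock, "GetFile", "status.sts").decode("ascii", "replace")
```

Calling `get_status(host)` returns the raw status.sts text, which would still need the custom parser before it could be served as JSON.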

Illinois Tech Robotics

Since my first week as a student at the Illinois Institute of Technology, I have been a member of the student organization Illinois Tech Robotics (ITR). I was first drawn to ITR because I really enjoy building things and applying my knowledge, as well as working with others while doing so. Throughout my time at IIT, I have worked on a wide range of ITR projects, and I would like to highlight some of the best and most exciting.

Centrifugal Pumpkin Launcher

Each fall, IIT's biomedical engineering society hosts a pumpkin launch competition in which teams of students or alumni build machines to throw pumpkins across the school's baseball field. Launchers are scored in three categories: distance, accuracy, and crowd favorite. ITR has historically fielded some of the biggest and best-performing launchers in the competition. In past years, many of ITR's launchers were variations of catapults. However, as ITR's membership shifted from mostly mechanical engineers to primarily computer engineers, we thought that, given our in-depth knowledge of and interest in computers and electronics, a computer-driven pumpkin launcher could likely outperform the competing mechanical launchers with ease, although it would be a massive engineering project. After discussing the pros and cons of many designs, we settled on a centrifugal design. In a centrifugal launcher, both the rotational speed of the launch arm and the release point during the rotation can be controlled by software, ideally allowing for excellent accuracy. Furthermore, unlike catapults, which must impart all of their energy to the pumpkin within a fraction of a second, centrifugal launchers can add very large amounts of energy to the pumpkin gradually by spinning the arm progressively faster over many rotations before releasing it. While the first couple of revisions of this launcher did not quite perform as expected, they provided valuable insight for the design of Mach 4, and they also took 1st place for accuracy in their second year and 1st place for distance last year. After analyzing all of the shortcomings discovered in the early revisions, and determined to make this year's launcher not just perform acceptably but far outperform every other design, we concluded that it would need more engineering, as well as contributions from people with a broader range of knowledge and interests.
Mach 4 was the product of the work of many individuals from multiple disciplines, incorporating newly acquired skills in design and simulation as well as the lessons learned from past revisions. ITR's 2016 centrifugal launcher, Mach 4, stands 14 feet tall with its arm vertical and is powered by a 5-horsepower industrial motor capable of spinning the arm at more than 180 RPM, giving a tangential release speed of 90-100 MPH. The computerized release system uses an industrial optical encoder to track the arm's position in its rotation to within 1/2 of a degree, together with a redesigned, electrically triggered pneumatic release system consisting of three independent three-ring release mechanisms and a new retention net, so that the more than 1,000 lb of tension on the pumpkin and retention system is released more safely and at precisely the right moment. This new design performed very well, with approximately 90% accuracy and a maximum launch distance of 475 feet, winning ITR 1st place for distance and 2nd place for accuracy.
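
The launcher figures above can be sanity-checked with v = ωr. Assuming the 90-100 MPH release speed is reached at 180 RPM, the sketch below computes the implied effective arm radius and how little time the arm takes to sweep half a degree, which is roughly the timing window the encoder-driven release has to hit. The function names are illustrative.

```python
import math

MPH_TO_M_PER_S = 0.44704   # exact: 1 mile = 1609.344 m
M_TO_FT = 1 / 0.3048       # exact: 1 ft = 0.3048 m


def angular_speed(rpm: float) -> float:
    """Convert revolutions per minute to radians per second."""
    return rpm * 2 * math.pi / 60


def implied_radius_ft(rpm: float, release_mph: float) -> float:
    """Effective arm radius r = v / omega needed for a given tip speed."""
    return release_mph * MPH_TO_M_PER_S / angular_speed(rpm) * M_TO_FT


def sweep_time_ms(rpm: float, degrees: float = 0.5) -> float:
    """Milliseconds the arm takes to rotate through the given angle."""
    degrees_per_second = rpm * 360 / 60
    return degrees / degrees_per_second * 1000


if __name__ == "__main__":
    for mph in (90, 100):
        print(f"{mph} MPH at 180 RPM -> r = {implied_radius_ft(180, mph):.1f} ft")
    print(f"0.5 degree at 180 RPM takes {sweep_time_ms(180):.2f} ms")
```

At 180 RPM, ω = 6π ≈ 18.85 rad/s, which implies an effective radius of about 7.0 ft for 90 MPH and 7.8 ft for 100 MPH, and the arm sweeps half a degree in roughly 0.46 ms.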

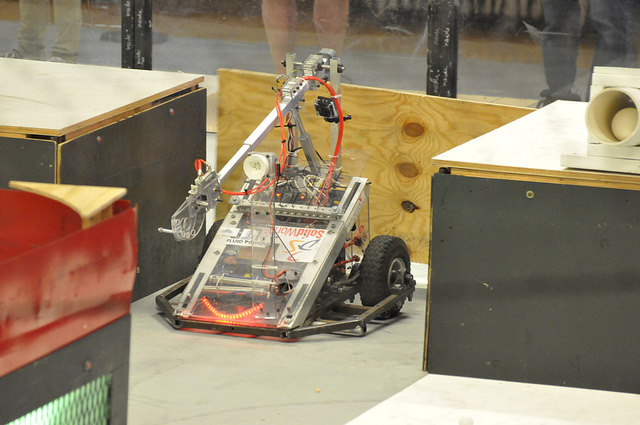

ITR Goliath

ITR Goliath is a robot designed to compete in the annual Midwest Regional Design Competition held at UIUC. When I first joined ITR, Goliath was an inactive project consisting of little more than a frame and a few small drive motors. I was very interested in leading and reviving the project along with several other students who were also excited about starting what was essentially a brand-new project. At first, it seemed that getting Goliath competition-ready would take a substantial amount of time. I also quickly realized that while I was very confident in my knowledge of certain areas, such as electrical engineering and software, I was quite uncertain in others, namely mechanical engineering. It would therefore take the efforts of a sizable group of people with knowledge and interests in a variety of disciplines to overcome the engineering challenges of such a design. Over the course of four competitions, some features of Goliath have changed significantly while others have remained relatively untouched, and Goliath has performed better each time. Most of these changes were driven by the lessons learned during the previous competition, and others by new members joining the project and older members graduating. Things that worked well were rarely modified, while areas where flaws were discovered were often reworked significantly. In 2014 and 2015, Goliath won the final demolition round of the competition, and in 2016 it took first place overall.